Author: Francesco Verdoja

Video on “Probabilistic Surface Friction Estimation Based on Visual and Haptic Measurements”

Watch the video demonstration of our latest paper on friction coefficient estimation.

Video Presentation at PAL workshop @ ICRA2020 “On the Potential of Smarter Multi-layer Maps in Robotics”

Watch the video presentation of our latest paper in the Hypermaps project.

Hypermaps at PAL ICRA 2020 workshop

We presented the core idea behind the Hypermaps project at the ICRA 2020 workshop on Perception, Action, Learning (PAL).

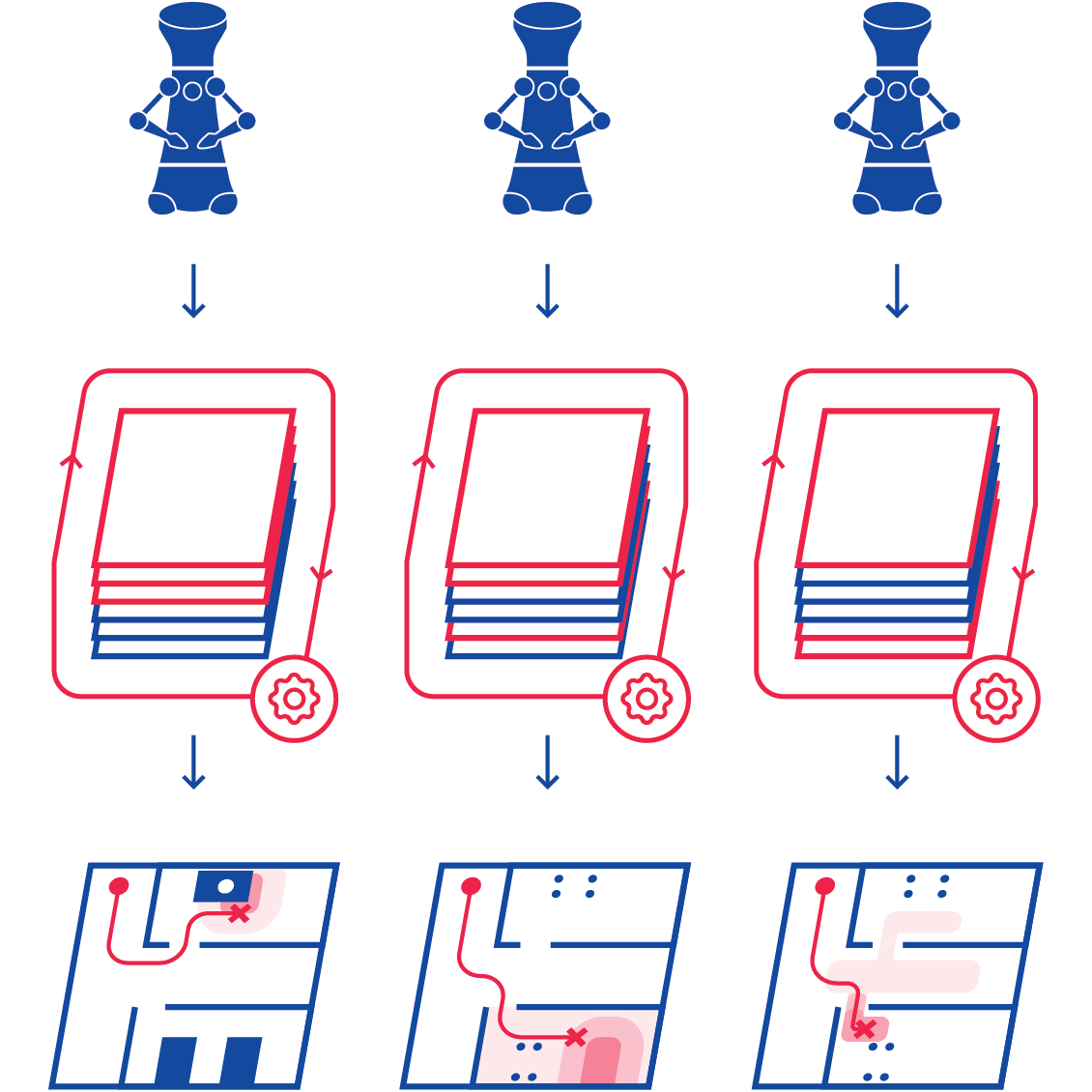

Hypermaps: Closing the complexity gap in robotic mapping

Hypermaps aim to improve how robots manage data about the environment they inhabit.

Occupancy Uncertainty Maps

In this project, we research how to build maps that capture the robot's uncertainty about the occupancy of objects in the environment.

We have shown that the constructed maps can increase global navigation safety by planning trajectories that avoid areas of high uncertainty, enabling greater autonomy for mobile robots in indoor settings.

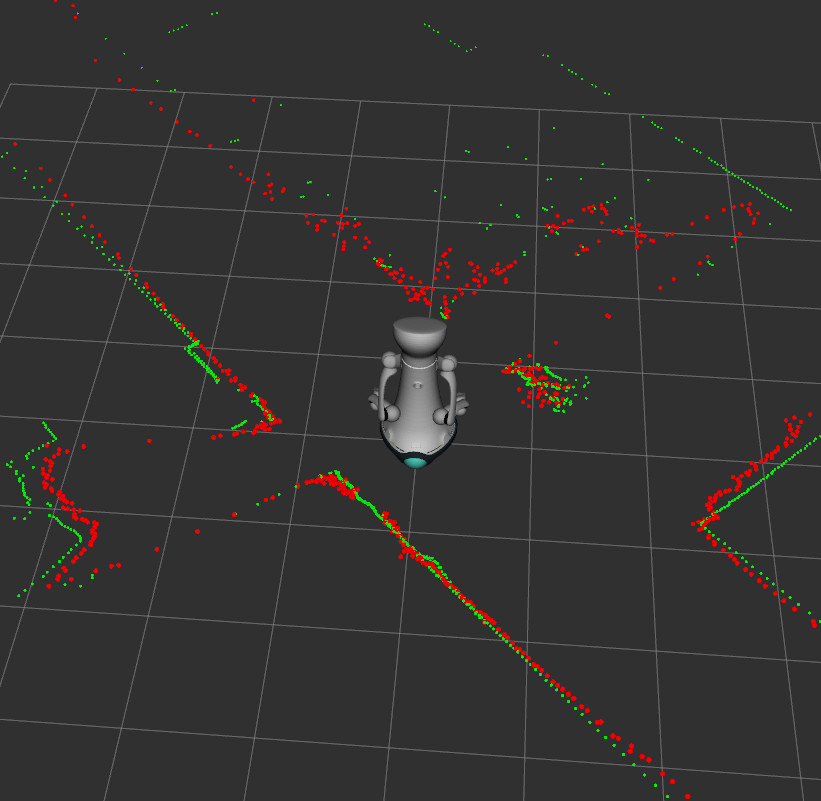

Dataset for “Hallucinating Robots”

We released the dataset for our IROS 2018 paper “Hallucinating Robots: Inferring Obstacle Distances from Partial Laser Measurements”.

Source code for the Hypermaps project

The source code for the article “Hypermap Mapping Framework and its Application to Autonomous Semantic Exploration” is available on GitHub.

Pre-print of “Hypermap Mapping Framework and its Application to Autonomous Semantic Exploration” available on arXiv

Our paper has been uploaded to arXiv.

Toy Dataset

The toy-dataset is a new RGB-D dataset captured with the Kinect sensor. It consists of typical children’s toys and contains a total of 449 RGB-D images along with their annotated ground-truth images.

Source code for “Hallucinating Robots”

We released the code for our IROS 2018 paper “Hallucinating Robots: Inferring Obstacle Distances from Partial Laser Measurements”.