Apply for a summer internship!

This year’s topics include foundation models, robotic manipulation and autonomous driving. Read more…

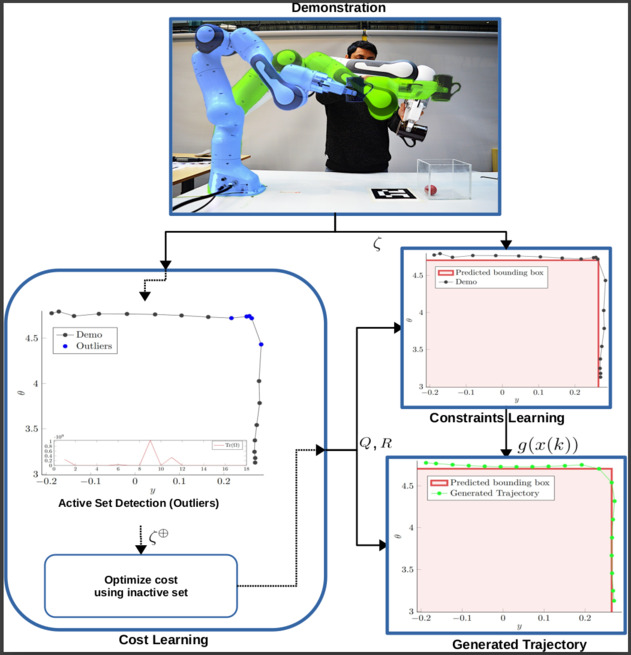

Master’s Thesis on “Learning From Demonstrations (LfDs) for Robotic Manipulators”

We are currently seeking a motivated and talented master’s student who, as part of their master’s thesis, will investigate and improve an existing method that explicitly learns cost and constraints.

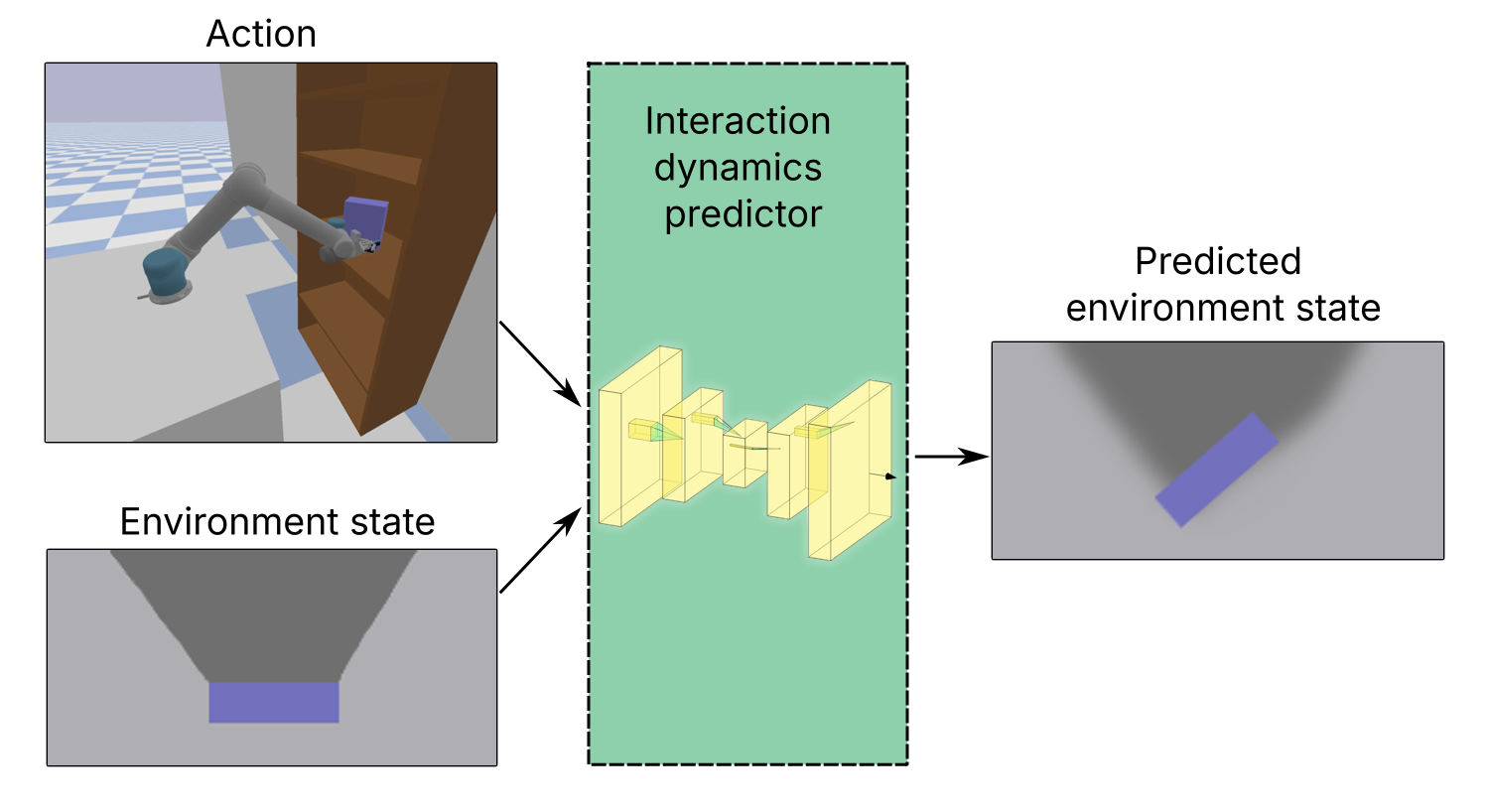

Master’s thesis on “Learning interactive environment dynamics for active search”

In this master’s thesis, the main task is to design a method that predicts how the state of the environment changes after a push action. The environment is a constrained, cluttered space containing rigid objects.

Human-in-the-Loop Shared Control with Guaranteed Safety for Teleoperated Robots

In this project, we develop a shared control framework that guarantees safety using control-invariant sets (CISs), which are computed from the robot’s dynamics and an environmental model. The CISs ensure that unsafe human commands are overridden, while safe commands are executed normally.
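The override logic described above can be illustrated with a toy example. This is only a minimal sketch under strong assumptions, not the project's method: the robot is reduced to a 1D single integrator, and the control-invariant set is a hypothetical position interval (`IntervalSet`), whereas the actual CISs are computed from the robot’s dynamics and an environmental model. All names here (`IntervalSet`, `step`, `shared_control`) are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IntervalSet:
    """Hypothetical control-invariant set: positions in [lower, upper].

    For the toy dynamics below, the command u = 0 always keeps the state
    inside the interval, so the interval is control invariant.
    """
    lower: float
    upper: float

    def contains(self, x: float) -> bool:
        return self.lower <= x <= self.upper


def step(x: float, u: float, dt: float) -> float:
    """Toy single-integrator dynamics (an assumption): x_next = x + u * dt."""
    return x + u * dt


def shared_control(x: float, u_human: float, dt: float, safe_set: IntervalSet):
    """Return (command, overridden) for one control step.

    A human command is executed unchanged if the predicted next state stays
    inside the safe set. Otherwise it is replaced by the command that moves
    the state to the nearest point of the set in the commanded direction.
    """
    x_pred = step(x, u_human, dt)
    if safe_set.contains(x_pred):
        return u_human, False
    # Clamp the predicted state onto the set and back out the command.
    x_target = min(max(x_pred, safe_set.lower), safe_set.upper)
    return (x_target - x) / dt, True


if __name__ == "__main__":
    cis = IntervalSet(lower=0.0, upper=1.0)
    print(shared_control(0.5, 0.5, 0.5, cis))  # safe command, passed through
    print(shared_control(0.5, 2.0, 0.5, cis))  # unsafe command, overridden
```

In this sketch the override is the smallest change that keeps the next state in the set; a real shared control framework could choose the safe replacement command differently.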

Intelligent Robotics @ IROS 2025

We will be attending the 2025 IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS 2025) in Hangzhou, China.

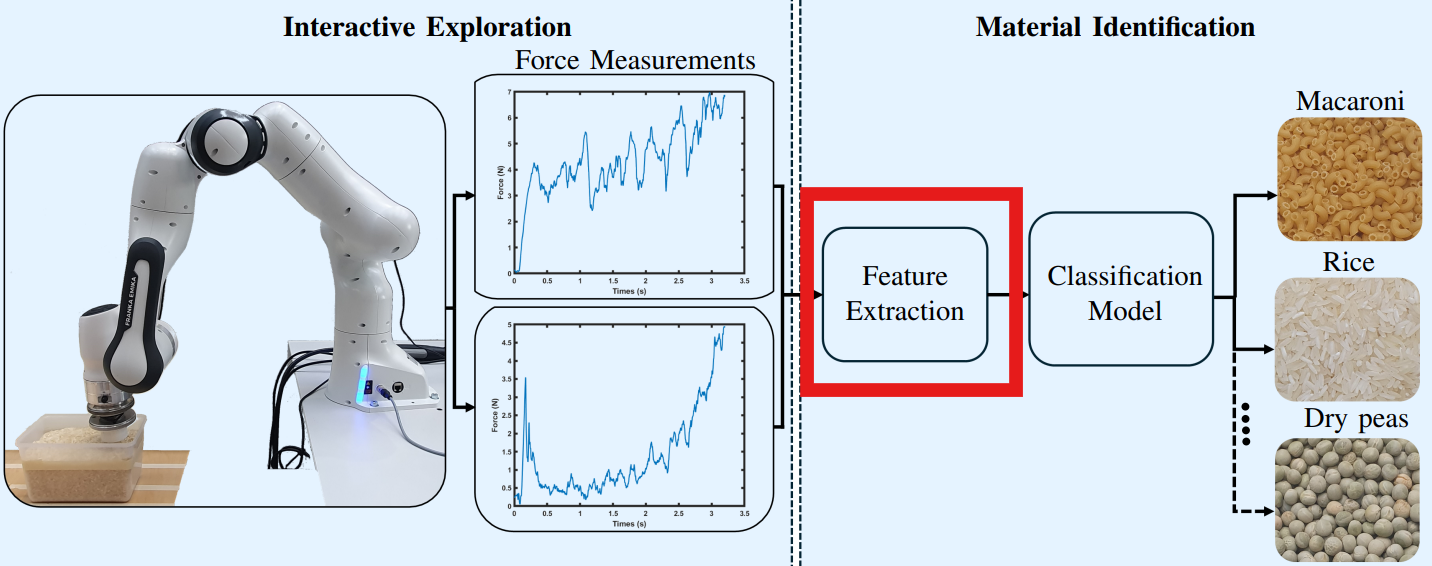

Interactive Identification of Granular Materials using Force Measurements

To be updated. The code and dataset are available at https://github.com/samhyn/granular_identification

Master’s Thesis Topic on Analysis of Tactile Signals

We are currently seeking a motivated and talented master’s student to work on discriminative filtering and feature extraction for the classification of tactile signals.

Intelligent Robotics @ IROS 2024

The Intelligent Robotics Group will be at IROS 2024 with six conference papers and two workshops.

Meet us at ICRA 2024!

Learn about our recent work at ICRA 2024. Read more!

See you soon at IROS 2023!

The Intelligent Robotics Group will attend IROS 2023 in Detroit. Read more!