Explainable Interactions between Humans and Autonomous Systems

As robotics research advances, there is a growing need for people-friendly communication between robots and humans. On the one hand, the decisions of an autonomous system need to be understandable to humans; on the other, humans need to be able to specify commands in a way that is natural to them.

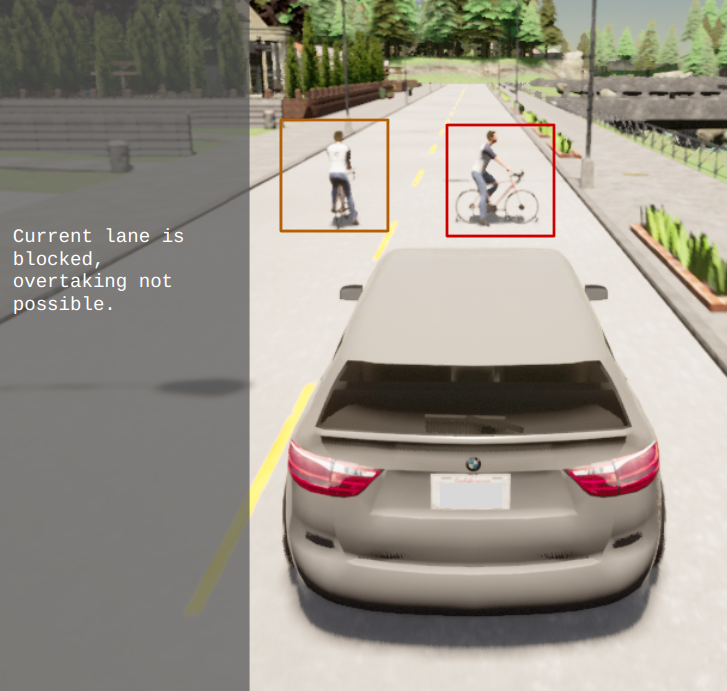

In shared autonomy, an operator needs to take control when the autonomous system is uncertain about a situation. For these cases, our research focuses on automatically generating hypothetical explanations of the model's decisions and on analyzing which parts of the data a human needs in order to understand the situation and react in a timely manner.

Another research direction is allowing humans to specify commands to autonomous systems intuitively, using natural language.

People involved

- Tsvetomila Mihaylova (tsvetomila.mihaylova(at)aalto.fi), Postdoctoral researcher.

- Ville Kyrki (ville.kyrki(at)aalto.fi), Professor, group leader.

Project updates

Master's Thesis on “Egocentric Gaze Prediction via Self-Supervised Feature Forecasting”

Predicting human gaze, especially in egocentric or driving scenarios, is fundamentally about modeling where people will attend next, not just where they are looking now. This thesis focuses on designing and implementing a pipeline that integrates feature forecasting with a gaze prediction module, conducting experiments on egocentric datasets, and systematically evaluating the benefits of future-aware representations.