Author: David Blanco Mulero

Our work “QDP: Learning to Sequentially Optimise Quasi-Static and Dynamic Manipulation Primitives for Robotic Cloth Manipulation” was accepted to IROS 2023!

We are happy to announce that our work “QDP: Learning to Sequentially Optimise Quasi-Static and Dynamic Manipulation Primitives for Robotic Cloth Manipulation” was accepted to IROS 2023.

See you soon at IROS 2023!

The Intelligent Robotics group will attend IROS 2023 in Detroit.

Deformable Object Manipulation

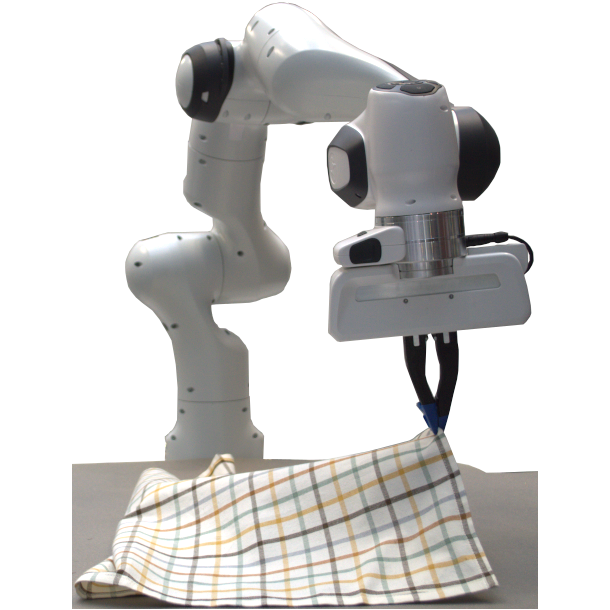

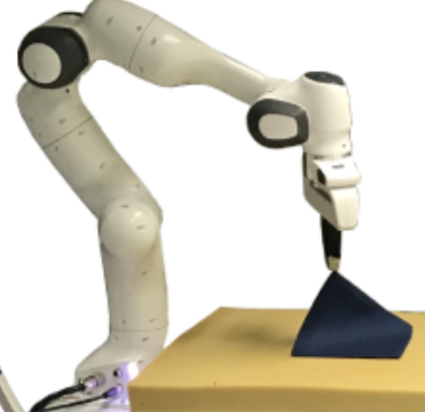

In this project we research how to manipulate deformable objects more efficiently by using dynamic manipulation, as well as how to model deformable objects via graph structures. Our applications range from the manipulation of granular materials, such as ground coffee, to cloth manipulation.

Participation at the ICRA’23 Cloth Manipulation Challenge

We participated in the Cloth Manipulation and Perception Track of the IEEE ICRA 2023 Robotic Grasping and Manipulation Competition.

Our work “Learning Visual Feedback Control for Dynamic Cloth Folding” was accepted to IROS 2022!

We are happy to announce that our work “Learning Visual Feedback Control for Dynamic Cloth Folding” was accepted to IROS 2022 and nominated for the IROS Best Paper Award, the IROS Best Student Paper Award and the IROS Best RoboCup Paper Award.

Manipulation of Granular Materials by Learning Particle Interactions

In this work we propose to use a Graph Neural Network (GNN) surrogate model to learn the particle interactions of granular materials. We perform planning of manipulation trajectories with the learnt surrogate model to arrange the material into a desired configuration.
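The idea of planning with a learnt particle-interaction model can be illustrated with a minimal sketch. Everything in it is an assumption for illustration only: `surrogate_step` is a hypothetical, hand-written one-step message-passing function standing in for the trained GNN (a real surrogate learns its messages from data instead of using a fixed repulsion rule), the push action `(tx, ty, dx, dy)` and the pile-gathering cost are invented, and random shooting is just one simple planner choice, not necessarily the one used in this work.

```python
import math
import random


def surrogate_step(positions, action, radius=0.3, repulsion=0.02, tool_radius=0.4):
    """Toy one-step particle-interaction model (hypothetical stand-in for a trained GNN).

    action = (tx, ty, dx, dy): a hypothetical tool at (tx, ty) displaces every
    particle within `tool_radius` by (dx, dy).
    """
    tx, ty, dx, dy = action
    n = len(positions)
    # Message passing: every edge i -> j (particles closer than `radius`) sends a
    # small repulsive message; messages are summed at the receiving node j.
    agg = [[0.0, 0.0] for _ in range(n)]
    for i in range(n):
        for j in range(n):
            if i == j:
                continue
            ex = positions[j][0] - positions[i][0]
            ey = positions[j][1] - positions[i][1]
            d = math.hypot(ex, ey)
            if 0.0 < d < radius:
                agg[j][0] += repulsion * ex / d
                agg[j][1] += repulsion * ey / d
    # Node update: current position + aggregated messages + tool displacement.
    new_positions = []
    for (x, y), (mx, my) in zip(positions, agg):
        pushed = math.hypot(x - tx, y - ty) < tool_radius
        new_positions.append((x + mx + (dx if pushed else 0.0),
                              y + my + (dy if pushed else 0.0)))
    return new_positions


def cost(positions, target):
    # Mean squared distance of the particles to a target point (i.e. gather a pile).
    return sum((x - target[0]) ** 2 + (y - target[1]) ** 2
               for x, y in positions) / len(positions)


def rollout(positions, actions):
    # Apply the surrogate repeatedly to predict the state after an action sequence.
    for a in actions:
        positions = surrogate_step(positions, a)
    return positions


def plan_random_shooting(positions, target, horizon=3, samples=200, seed=0):
    """Sample action sequences, roll each out with the surrogate and keep the
    sequence whose predicted final state has the lowest cost."""
    rng = random.Random(seed)
    # Always include the do-nothing sequence so the plan is never worse than it.
    candidates = [[(0.0, 0.0, 0.0, 0.0)] * horizon]
    for _ in range(samples):
        candidates.append([(rng.uniform(-1, 1), rng.uniform(-1, 1),
                            rng.uniform(-0.2, 0.2), rng.uniform(-0.2, 0.2))
                           for _ in range(horizon)])
    best_plan, best_cost = None, math.inf
    for plan in candidates:
        c = cost(rollout(positions, plan), target)
        if c < best_cost:
            best_plan, best_cost = plan, c
    return best_plan, best_cost


if __name__ == "__main__":
    rng = random.Random(1)
    particles = [(rng.uniform(-1, 1), rng.uniform(-1, 1)) for _ in range(20)]
    plan, c = plan_random_shooting(particles, target=(0.5, 0.5))
    print(len(plan), round(c, 3))
```

Because the surrogate is only queried, never differentiated, the same planner works unchanged if the toy `surrogate_step` is swapped for a learnt model with the same input and output.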

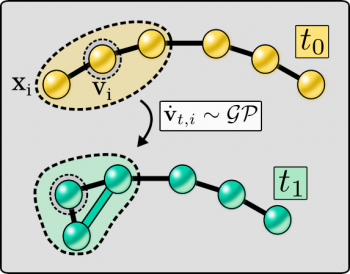

Evolving-Graph Gaussian Processes poster at the Time Series Workshop at ICML 2021

This work extends the current state of the art in Graph Gaussian Processes (GGPs) to dynamic graphs and assesses the performance of the proposed evolving-Graph Gaussian Process (e-GGP) on two simulated tasks in which deformable objects are represented as a graph that evolves over time.
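For readers unfamiliar with GGPs, the sketch below shows a neighbourhood-averaged graph kernel of the kind used by a standard (static) Graph Gaussian Process: the covariance between two nodes averages a base RBF kernel over all pairs of nodes in their 1-hop neighbourhoods (each node included in its own neighbourhood). It is not the e-GGP kernel; evaluating it on two graph snapshots only illustrates how node covariances shift as edges appear or disappear. The function names, the RBF base kernel and the toy three-node graph are assumptions for illustration.

```python
import math


def rbf(xa, xb, lengthscale=1.0, variance=1.0):
    # Base kernel on node features (squared-exponential).
    sq = sum((a - b) ** 2 for a, b in zip(xa, xb))
    return variance * math.exp(-0.5 * sq / lengthscale ** 2)


def neighbourhood(adj, i):
    # 1-hop neighbourhood of node i, including i itself.
    return [i] + [j for j in adj[i] if j != i]


def ggp_kernel(features, adj, i, j, **kw):
    """Average the base kernel over all pairs (a, b), a in Ne(i), b in Ne(j)."""
    ni, nj = neighbourhood(adj, i), neighbourhood(adj, j)
    total = sum(rbf(features[a], features[b], **kw) for a in ni for b in nj)
    return total / (len(ni) * len(nj))


def gram(features, adj, **kw):
    # Full node-by-node covariance matrix for one graph snapshot.
    n = len(features)
    return [[ggp_kernel(features, adj, i, j, **kw) for j in range(n)] for i in range(n)]


if __name__ == "__main__":
    features = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
    adj_t0 = [[1], [0, 2], [1]]  # chain 0-1-2
    adj_t1 = [[], [2], [1]]      # edge 0-1 has disappeared
    print(round(gram(features, adj_t0)[0][1], 3))  # 0.659
    print(round(gram(features, adj_t1)[0][1], 3))  # 0.371
```

Averaging over neighbourhoods is what lets the graph structure, and not only the node features, shape the covariance: when the edge between nodes 0 and 1 disappears, their covariance drops even though their features are unchanged.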

Autonomous Driving

Driverless cars and autonomous driving have shown major progress recently through the use of machine learning to learn driving behaviours from human demonstrations. However, the uptake of these systems is still limited, especially since the safety of such data-driven solutions is difficult to guarantee or even assess. Our work in autonomous driving targets the question of how […]

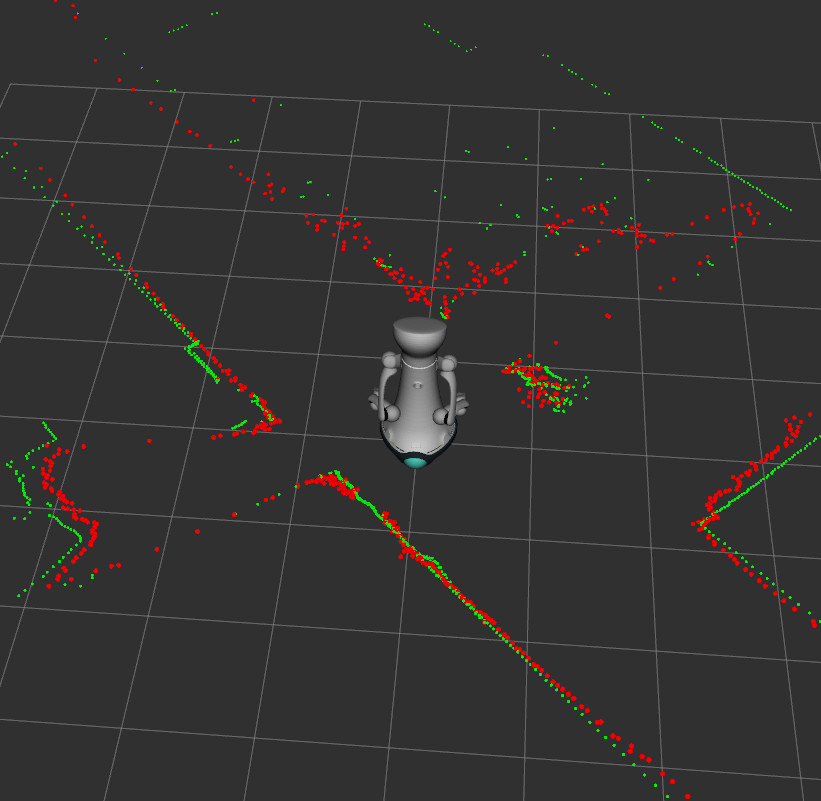

Mapping and Navigation

For any mobile robotic platform, the ability to navigate its environment is important. Avoiding dangerous situations such as collisions and unsafe conditions is a crucial capability to achieve autonomy. Moreover, robots need to maintain a representation of their environment in order to properly operate inside it; this is achieved through maps, often built by the […]

Robotic Perception

Robots’ ability to interact with their surroundings is an essential capability, especially in unstructured human-inhabited environments. The knowledge of such an environment is usually obtained through sensors. The study of acquiring knowledge from sensor data is called robotic perception. Perception is the first step in many tasks such as manipulation or human-robot interaction.