Tag: Master thesis topic

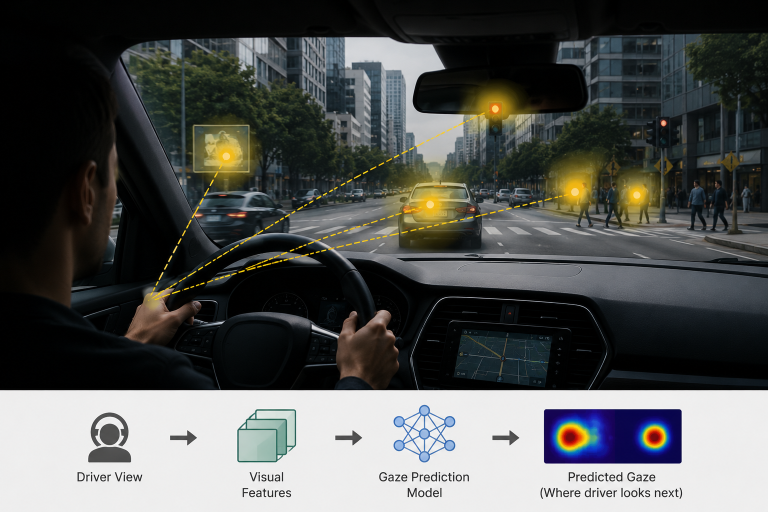

Master Thesis on “Egocentric Gaze Prediction via Self-Supervised Feature Forecasting”

Predicting human gaze, especially in egocentric or driving scenarios, is fundamentally about modeling where people will attend next, not just where they are looking now. This thesis focuses on designing and implementing a pipeline that integrates feature forecasting with a gaze prediction module, conducting experiments on egocentric datasets, and systematically evaluating the benefits of future-aware representations.

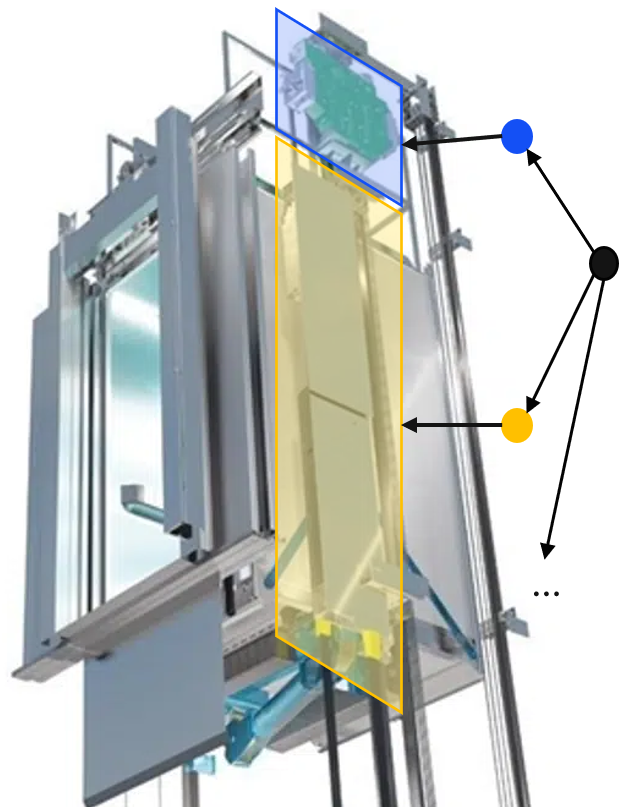

Master Thesis on “Visual segmentation of elevator components with robotic platform”

This thesis focuses on developing and evaluating visual segmentation methods for elevator components using a mobile robotic platform.

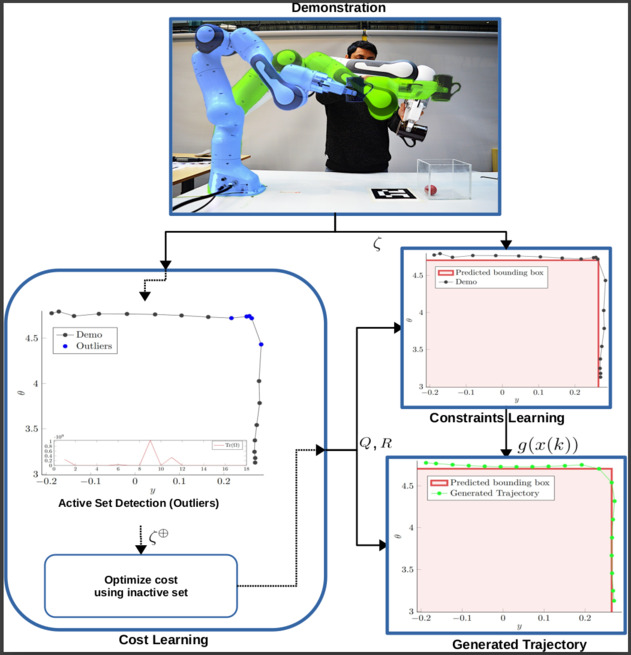

Master Thesis on “Learning From Demonstrations (LfDs) for Robotic Manipulators”

We are currently seeking a motivated and talented master’s student who, as part of their master’s thesis, will investigate and improve upon an existing method that explicitly learns costs and constraints.

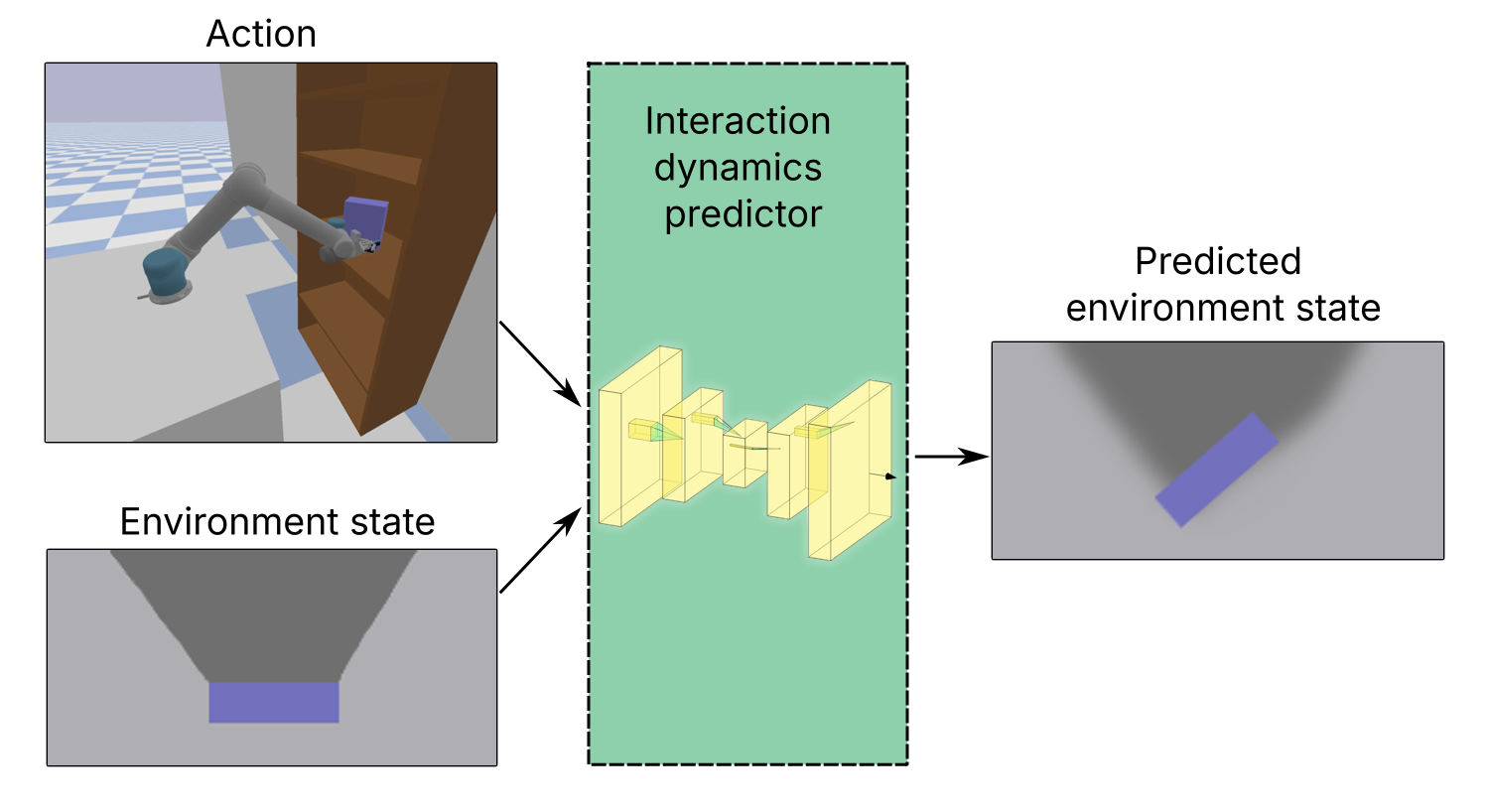

Master’s thesis on “Learning interactive environment dynamics for active search”

In this master’s thesis, the main task is to design a method that predicts changes in the state of the environment after a push action. The environment consists of a constrained, cluttered space containing rigid objects.

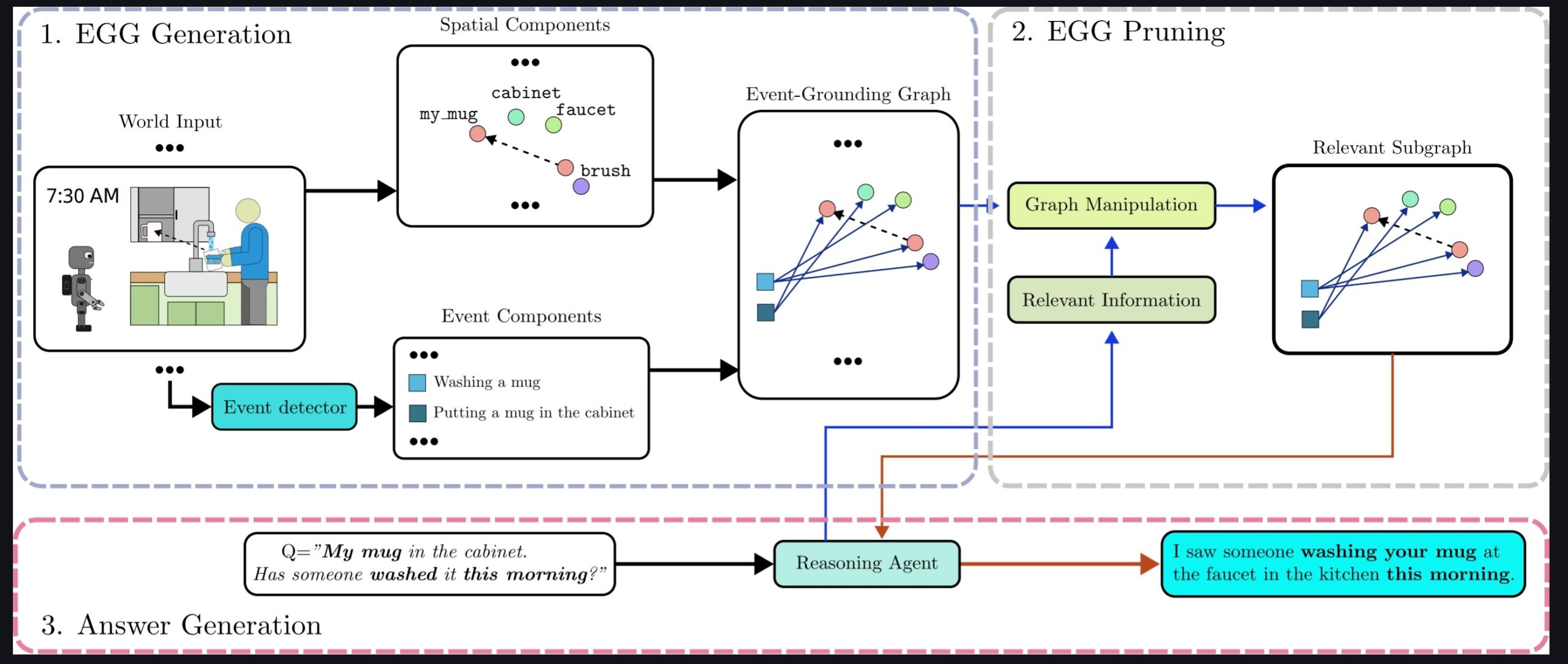

Master’s Thesis on “Tracking of Environmental Changes Using 3D Scene Graphs”

Scene understanding is crucial to advancing the deployment of autonomous robotic systems in the real world. The selected student will focus on developing a framework for constructing 3D scene graphs (3DSGs) capable of recording changes in dynamic environments as they occur.

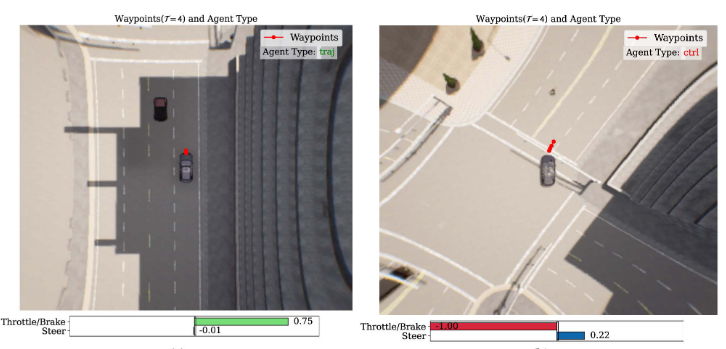

Master Thesis on “Learning Latent Action Policies for Autonomous Driving”

Learning action-conditioned driving dynamics from raw pixels is challenging due to high dimensionality and weak temporal cues. This work combines Latent Action Pretraining (LAPA) with a conditional Diffusion Model to learn discrete latent actions and predict their future evolution. The framework captures multi-modal driving behaviors in latent space, enabling interpretable and data-efficient policy learning for autonomous driving.

Human-in-the-Loop Shared Control with Guaranteed Safety for Teleoperated Robots

In this project, we develop a shared control framework that guarantees safety using control-invariant sets (CISs), which are computed from the robot’s dynamics and an environmental model. The CISs ensure that unsafe human commands are overridden, while safe commands are executed normally.
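
To make the override idea concrete, here is a minimal sketch under strong simplifying assumptions; it is not the project's method. It assumes a hypothetical 1-D double-integrator robot approaching a wall at `P_MAX`, and it stands in for a CIS with the set of states from which a full-braking backup action never crosses the wall. All names and constants (`State`, `step`, `brake`, `in_safe_set`, `shared_control`, `DT`, `A_MAX`, `P_MAX`) are illustrative assumptions.

```python
from dataclasses import dataclass

DT = 0.05     # time step [s] (assumed)
A_MAX = 2.0   # acceleration limit [m/s^2] (assumed)
P_MAX = 1.0   # wall position from the environment model [m] (assumed)


@dataclass(frozen=True)
class State:
    p: float  # position [m]
    v: float  # velocity [m/s]


def step(s: State, a: float) -> State:
    """Discrete-time double integrator with a clamped acceleration input."""
    a = max(-A_MAX, min(A_MAX, a))
    return State(s.p + s.v * DT, s.v + a * DT)


def brake(s: State) -> float:
    """Backup action: decelerate as hard as allowed without reversing."""
    if s.v <= 0.0:
        return 0.0
    return -min(A_MAX, s.v / DT)


def in_safe_set(s: State) -> bool:
    """True if braking from s never crosses the wall.

    The set of such states is control invariant by construction:
    applying brake() from a state in the set lands in the set again.
    """
    while True:
        if s.p > P_MAX:
            return False
        if s.v <= 0.0:
            return True
        s = step(s, brake(s))


def shared_control(s: State, a_human: float) -> float:
    """Pass the human command through if it keeps the state in the set,
    otherwise override it with the backup braking action."""
    a_human = max(-A_MAX, min(A_MAX, a_human))
    if in_safe_set(step(s, a_human)):
        return a_human
    return brake(s)


if __name__ == "__main__":
    s = State(0.0, 0.0)
    for _ in range(60):
        # The operator keeps pushing toward the wall at full acceleration.
        s = step(s, shared_control(s, A_MAX))
    print(f"final position: {s.p:.3f} m (wall at {P_MAX} m)")
```

In this toy setting the operator's command is executed unchanged while the robot is far from the wall and is replaced by braking only when the next state would leave the set. A real system would need a set computed from the actual robot dynamics and environment model.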

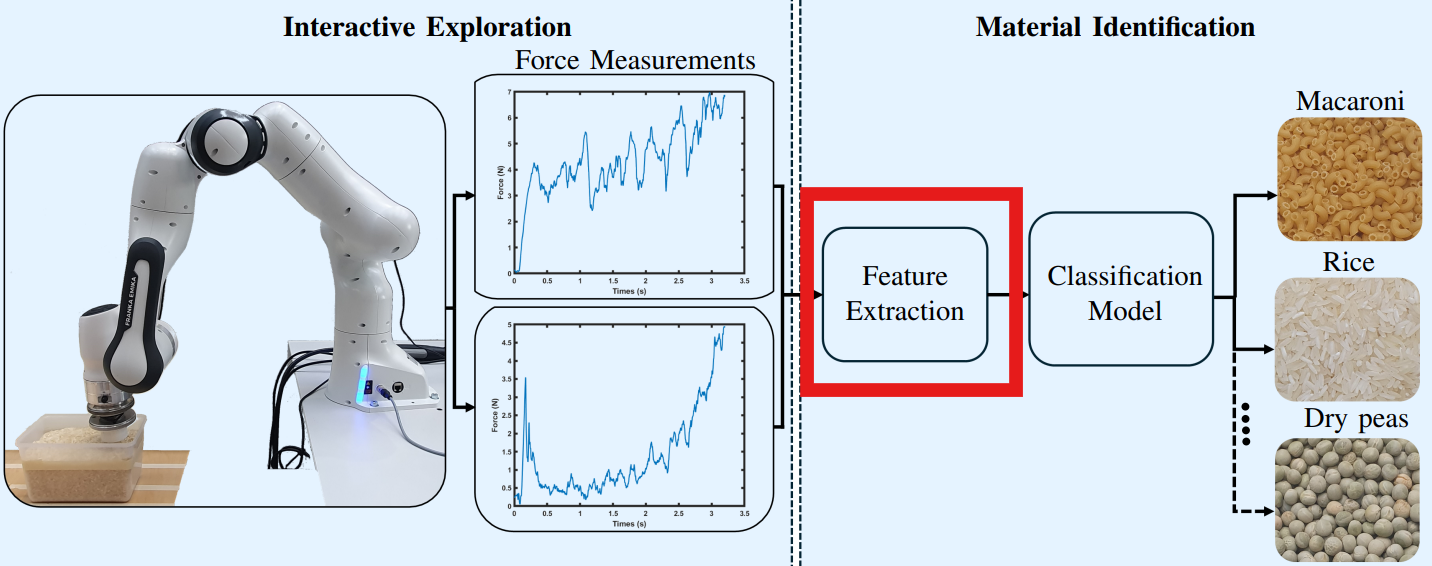

Master’s thesis Topic on Analysis of Tactile Signals

We are currently seeking a motivated and talented master’s student to work on discriminative filtering and feature extraction for the classification of tactile signals.