Master's Thesis on “Deep Imitative Models for Safe Planning of Autonomous Driving”

Supervisor: Prof. Ville Kyrki (ville.kyrki@aalto.fi)

Advisors: Dr. Shoaib Azam (shoaib.azam@aalto.fi), Dr. Gökhan Alcan (gokhan.alcan@aalto.fi)

Keywords: imitation learning, model-based reinforcement learning, autonomous driving, safe planning.

Project Description

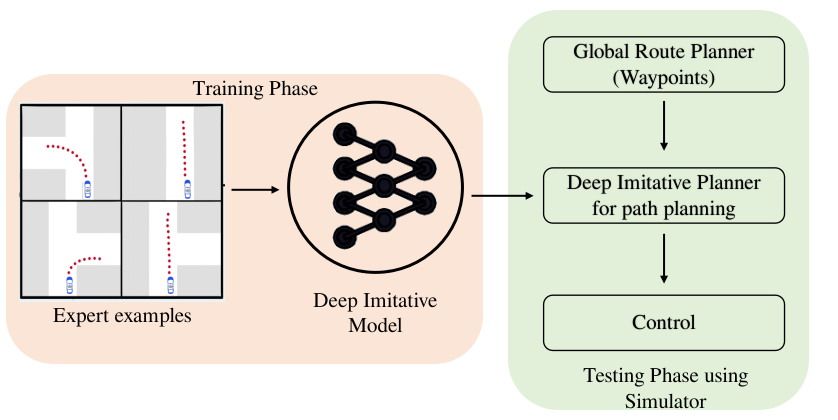

Safety and robustness are critical challenges in planning for autonomous driving. Learning-based methods built on imitation learning (IL) perform well in a wide range of situations but do not resolve these challenges. For instance, such a system performs well in domains that resemble its training data but can fail unpredictably in novel, out-of-distribution situations. In contrast to IL, model-based reinforcement learning (MBRL) methods do not require expert demonstrations and adapt to new tasks through the specification of reward functions. However, designing a reward function that elicits the desired behavior in a complex environment is difficult when the space of possible undesirable behaviors is large.

The goal of this thesis is to devise an algorithm that combines the advantages of both IL and MBRL for robust and safe planning in autonomous driving. In this context, the thesis is expected to include implementations of IL and MBRL algorithms and their fusion for planning tasks.
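As a rough illustration of what such a combination could look like, the sketch below scores candidate trajectories by the sum of an imitation prior and a goal term, in the spirit of the first reference listed below. Everything in it is an assumption made for illustration: the Gaussian stand-in for a learned IL density, the function names `imitation_log_prior`, `goal_log_likelihood`, and `plan`, the hand-written candidates, and the choice of NumPy instead of PyTorch to keep the example small. It is not the method to be developed in the thesis.

```python
import numpy as np


def imitation_log_prior(traj, expert_mean, sigma=1.0):
    """Toy stand-in for a learned IL density (assumption):
    isotropic Gaussian log-density, up to a constant, around an expert-like path."""
    diff = traj - expert_mean
    return -0.5 * np.sum(diff ** 2) / sigma ** 2


def goal_log_likelihood(traj, goal, sigma=0.5):
    """Toy goal term specified at test time (assumption):
    Gaussian log-likelihood, up to a constant, on the final waypoint."""
    diff = traj[-1] - goal
    return -0.5 * np.sum(diff ** 2) / sigma ** 2


def plan(candidates, expert_mean, goal):
    """Return the index of the candidate maximizing prior + goal score, and all scores."""
    scores = [
        imitation_log_prior(c, expert_mean) + goal_log_likelihood(c, goal)
        for c in candidates
    ]
    return int(np.argmax(scores)), scores


T = 5  # number of waypoints per trajectory
xs = np.linspace(1.0, 5.0, T)

# Hypothetical expert behavior: drive straight along the lane center (y = 0).
expert_mean = np.stack([xs, np.zeros(T)], axis=1)

# Hypothetical goal: end slightly to the left of the lane center.
goal = np.array([5.0, 1.0])

candidates = [
    expert_mean.copy(),                                # stay on the lane center
    np.stack([xs, np.linspace(0.2, 1.0, T)], axis=1),  # gentle drift toward the goal
    np.stack([xs, np.linspace(1.0, 5.0, T)], axis=1),  # sharp swerve, unlike the expert
]

best, scores = plan(candidates, expert_mean, goal)
print("selected candidate:", best)
```

With these toy numbers the gentle drift is selected: it reaches the goal while staying close to expert-like behavior, whereas the sharp swerve is penalized heavily by the imitation prior. In a real system the prior would be a trained density model and the candidates would come from sampling or gradient-based optimization.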

Deliverables

- Review of relevant literature,

- Training and testing of IL and MBRL methods,

- Comparative study of network architectures and hyperparameters,

- Design of edge cases for autonomous driving planning,

- Comparison of the implementation with the state-of-the-art methods.

Practical Information

Prerequisites: Python (high), Deep Learning (high); previous experience with RL is a big plus

Tools: PyTorch

Simulators (subject to change): CARLA, DriverGym, highway-env

Start: Available immediately

References

- Deep Imitative Models for Flexible Inference, Planning, and Control, https://arxiv.org/abs/1810.06544

- R2P2: A ReparameteRized Pushforward Policy for Diverse, Precise Generative Path Forecasting, https://link.springer.com/chapter/10.1007/978-3-030-01261-8_47

- Contingencies from Observations: Tractable Contingency Planning with Learned Behavior Models, https://arxiv.org/pdf/2104.10558.pdf