Semantic map generation in SUMO

Supervisor: Prof. Ville Kyrki (ville.kyrki@aalto.fi).

Advisors: Daulet Baimukashev (daulet.baimukashev@aalto.fi), Dr. Shoaib Azam (shoaib.azam@aalto.fi)

Project Description

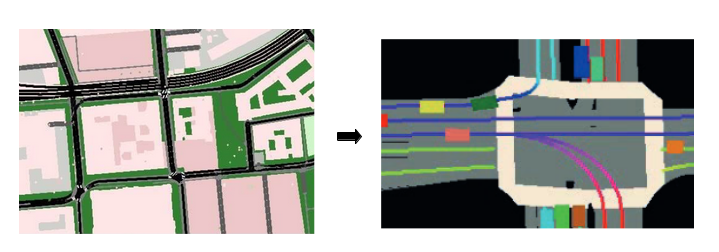

This project aims to extend the functionality of the SUMO simulator with software packages that generate semantic representations of the environment and control vehicles through low-level control actions. This would enable the integration of data-driven vehicle models. The current vehicle models in SUMO are rule-based and are not suitable for generating diverse driving policies from available observations. Instead, we propose vehicle models with learned policies that use a semantic representation of their surrounding environment. Bird’s eye view (BEV) is one of the most popular ways of encoding complex and dynamic road information, including road structure, traffic lights, and other agents’ motion, into a semantic representation [1, 2]. This BEV representation is well suited for learning a mapping from images to low-level control commands. While real-world datasets with extensive expert data are available from Waymo, nuPlan, and Lyft, SUMO makes it possible to generate numerous scenarios and to work with existing real-world maps.

Deliverables

- C++ code packages for generating semantic maps of the environment and bird’s eye view (BEV) image representations similar to those in driving datasets

- Rendering of road structure, traffic lights, and vehicle positions

- Calibration of the representations to different formats matching the available datasets
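As an illustration of the vehicle-position rendering listed above, the following is a minimal C++ sketch of rasterising vehicle footprints into one ego-centred BEV occupancy channel. All names (`BevConfig`, `VehicleState`, `Layer`, `rasterizeVehicles`) are hypothetical and not part of SUMO. The 200 × 200 px grid and 0.5 m/px resolution are placeholder assumptions that would be calibrated to the target dataset, and vehicle states are assumed to be given as centre positions with counter-clockwise headings in radians, so SUMO's own position and angle conventions would have to be converted first.

```cpp
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical BEV grid settings (not a SUMO API); values are placeholders
// to be calibrated against the resolution and image size of a target dataset.
struct BevConfig {
    int width_px = 200;          // image width in pixels
    int height_px = 200;         // image height in pixels
    double meters_per_px = 0.5;  // spatial resolution
};

// Assumed vehicle state: centre position in the world frame [m],
// heading in radians counter-clockwise from +x, footprint size [m].
struct VehicleState {
    double x, y;
    double heading;
    double length, width;
};

// One single-channel layer (row-major); a full BEV would stack several,
// e.g. road structure, traffic lights and vehicles.
using Layer = std::vector<std::uint8_t>;

// Rasterise vehicle footprints into an ego-centred grid in which the ego
// vehicle sits at the image centre and faces towards row 0 (image "up").
Layer rasterizeVehicles(const BevConfig& cfg, const VehicleState& ego,
                        const std::vector<VehicleState>& others) {
    Layer layer(static_cast<std::size_t>(cfg.width_px) *
                    static_cast<std::size_t>(cfg.height_px), 0);
    const double c = std::cos(ego.heading);
    const double s = std::sin(ego.heading);
    for (int row = 0; row < cfg.height_px; ++row) {
        for (int col = 0; col < cfg.width_px; ++col) {
            // Pixel centre in the ego frame: forward is up, left is image-left.
            const double fwd = (cfg.height_px / 2.0 - (row + 0.5)) * cfg.meters_per_px;
            const double left = (cfg.width_px / 2.0 - (col + 0.5)) * cfg.meters_per_px;
            // Ego frame -> world frame.
            const double wx = ego.x + c * fwd - s * left;
            const double wy = ego.y + s * fwd + c * left;
            for (const VehicleState& v : others) {
                // World frame -> vehicle frame, then test against the oriented box.
                const double dx = wx - v.x;
                const double dy = wy - v.y;
                const double cv = std::cos(v.heading);
                const double sv = std::sin(v.heading);
                const double lon = cv * dx + sv * dy;
                const double lat = -sv * dx + cv * dy;
                if (std::abs(lon) <= v.length / 2.0 && std::abs(lat) <= v.width / 2.0) {
                    layer[static_cast<std::size_t>(row * cfg.width_px + col)] = 255;
                    break;  // pixel already occupied
                }
            }
        }
    }
    return layer;
}
```

The per-pixel point-in-box test keeps the sketch short and easy to check, but it costs O(width × height × vehicles). A practical version would restrict each vehicle to its bounding box or fill the rotated rectangle as a polygon (for example with OpenCV's `cv::fillPoly`). Road-structure and traffic-light channels could be rendered into further layers of the same grid and stacked with this one.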

Practical Information

Prerequisites: C++, Python

Start: Available immediately

References:

- [1] Bansal, M., Krizhevsky, A., & Ogale, A. (2018). ChauffeurNet: Learning to drive by imitating the best and synthesizing the worst. arXiv preprint arXiv:1812.03079.

- [2] Bergamini, L., Ye, Y., Scheel, O., Chen, L., Hu, C., Del Pero, L., … & Ondruska, P. (2021, May). SimNet: Learning reactive self-driving simulations from real-world observations. In 2021 IEEE International Conference on Robotics and Automation (ICRA) (pp. 5119-5125). IEEE.