Master Thesis on “Interactive Bayesian Multiobjective Evolutionary Optimization in Reinforcement Learning Problems with Conflicting Reward Functions”

Supervisor: Prof. Ville Kyrki (ville.kyrki@aalto.fi)

Advisors: Dr. Atanu Mazumdar (atanu.mazumdar@aalto.fi), Dr. Kevin Luck (kevin.s.luck@aalto.fi)

Keywords: evolutionary robotics, multiobjective optimization, Bayesian optimization, reinforcement learning

Project Description

In many real-world problems, multiple conflicting objective functions need to be optimized simultaneously. For example, an investment company wants to build an optimal stock portfolio that maximizes profit while minimizing risk. However, most reinforcement learning (RL) formulations do not explicitly consider the tradeoff between conflicting reward functions and instead assume a single scalarized reward function. Multiobjective evolutionary optimization algorithms (MOEAs) can be used to find Pareto optimal policies by treating the reward functions as separate objectives. However, obtaining many Pareto optimal solutions is challenging because training RL models is computationally expensive. In addition, handling many solutions becomes difficult in the decision-making phase, when a single policy must be selected and applied based on the preferences of a human decision-maker (DM). Interactive optimization algorithms address both problems by letting the DM express preferences during the optimization process and thereby guide the search toward the region of interest. The DM can learn about the tradeoffs between the objectives and converge to the final solution(s) much more quickly than with traditional MOEAs.

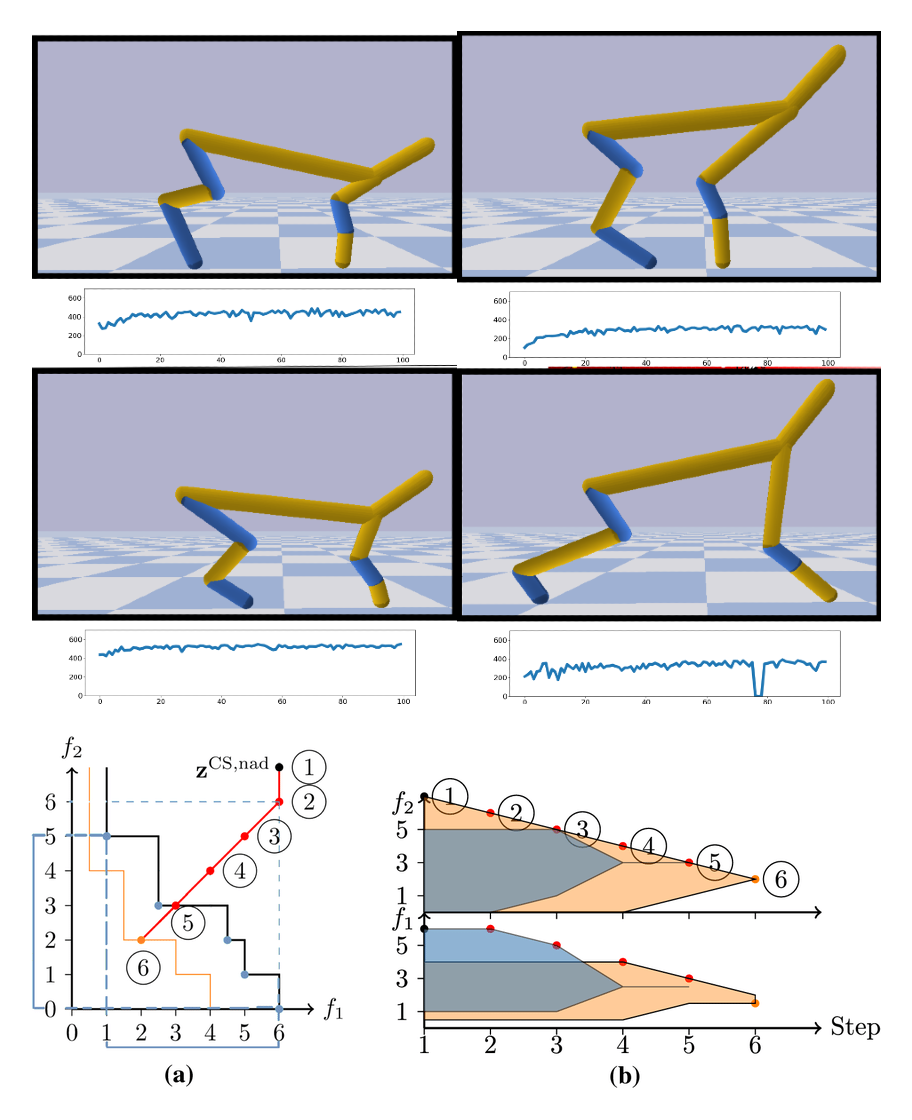

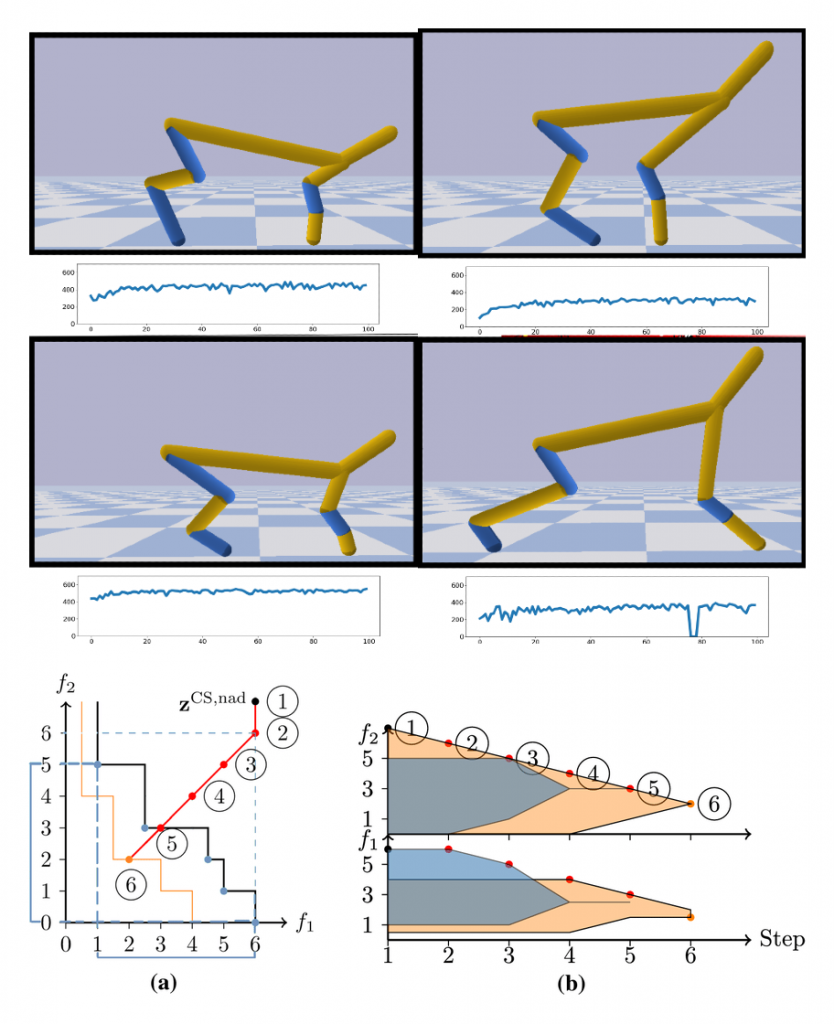
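To make the difference between a scalarized reward and a set of Pareto optimal policies concrete, the following minimal sketch filters a list of policies down to the non-dominated ones and then shows that a fixed weighting picks out only a single tradeoff. The return values, the weights, and the function names (`dominates`, `pareto_front`, `scalarize`) are illustrative assumptions and not part of the project; both objectives are assumed to be maximized.

```python
from typing import List, Sequence, Tuple


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Return True if a Pareto-dominates b (all objectives are maximized)."""
    no_worse = all(x >= y for x, y in zip(a, b))
    strictly_better = any(x > y for x, y in zip(a, b))
    return no_worse and strictly_better


def pareto_front(points: List[Tuple[float, ...]]) -> List[Tuple[float, ...]]:
    """Keep only the points that no other point dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


def scalarize(point: Sequence[float], weights: Sequence[float]) -> float:
    """Weighted-sum scalarization of a vector of returns."""
    return sum(w * x for w, x in zip(weights, point))


# Hypothetical (return under reward 1, return under reward 2) for six policies.
policy_returns = [
    (1.0, 5.0), (2.0, 4.0), (3.0, 3.0), (2.0, 2.0), (4.0, 1.0), (1.5, 1.0),
]

# Four of the six policies are mutually non-dominated tradeoffs.
front = pareto_front(policy_returns)

# A fixed weighting commits to one tradeoff before seeing the alternatives.
favour_first = max(policy_returns, key=lambda p: scalarize(p, (0.8, 0.2)))
favour_second = max(policy_returns, key=lambda p: scalarize(p, (0.2, 0.8)))

print(front)
print(favour_first, favour_second)
```

In an interactive method, the DM would inspect a few points of such a front and state preferences, instead of fixing the weights in advance.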

The goal of this thesis is to develop new methods, based on active learning and interactive Bayesian evolutionary multiobjective optimization, for solving a practical bi-level optimization problem: uncovering optimal designs (e.g., leg lengths) and behaviors for robots. In this task, the optimization method proposes agent designs, and each agent learns the optimal behavior for its given design and reward functions. This iterative process is especially expensive because constructing and manufacturing robot designs in the real world is very slow. Developing a sample-efficient multiobjective optimization method capable of finding optimal designs for (conflicting) sets of reward functions would provide another stepping stone toward real-world co-design of robots.
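The bi-level structure described above can be sketched as an outer loop that proposes designs and an inner loop that evaluates each design under two reward functions. This is only a toy illustration under loud assumptions: `train_and_evaluate` is a hypothetical stand-in for RL training, its two formulas are invented to create a conflict, the design is reduced to two leg lengths, and uniform random proposals take the place of the Bayesian or evolutionary proposal step that the thesis would develop.

```python
import random
from typing import List, Tuple

Design = Tuple[float, float]   # hypothetical: (front leg length, rear leg length)
Returns = Tuple[float, float]  # hypothetical: (speed return, energy-saving return)


def train_and_evaluate(design: Design) -> Returns:
    """Toy stand-in for the inner loop: train a policy for a fixed design."""
    front, rear = design
    speed = front + rear                       # toy assumption: longer legs are faster
    energy_saving = -(front ** 2 + rear ** 2)  # toy assumption: longer legs cost more
    return speed, energy_saving


def dominates(a: Returns, b: Returns) -> bool:
    """Return True if a Pareto-dominates b (both returns are maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))


def co_design(budget: int, seed: int = 0) -> List[Tuple[Design, Returns]]:
    """Outer loop: propose designs and keep an archive of non-dominated ones."""
    rng = random.Random(seed)
    archive: List[Tuple[Design, Returns]] = []
    for _ in range(budget):
        # Placeholder proposal step; a sample-efficient method would go here.
        design = (rng.uniform(0.1, 1.0), rng.uniform(0.1, 1.0))
        returns = train_and_evaluate(design)   # expensive step in a real setting
        if any(dominates(r, returns) for _, r in archive):
            continue                           # new design is dominated: discard it
        # Drop archived designs that the new design dominates, then add it.
        archive = [(d, r) for d, r in archive if not dominates(returns, r)]
        archive.append((design, returns))
    return archive


archive = co_design(budget=50)
print(len(archive), "non-dominated designs found")
```

Because every call to the inner loop corresponds to a full training run (and, in the real world, a manufactured robot), the budget of outer-loop proposals is the quantity a sample-efficient method tries to keep small.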

Deliverables

- Review of relevant literature

- Training and testing different RL methods with conflicting reward functions

- Proposing and evaluating a multiobjective optimization method capable of optimizing for (conflicting) sets of reward/value functions

- Demonstration of method in a simulated co-design task

Practical Information

Prerequisites: Python, deep learning. Previous experience with IRL is a big plus.

Tools to be used: PyTorch, DESDEO, PyGMO

Simulators: Gym, MuJoCo/PyBullet

Start: Available immediately

References

- C. A. Coello Coello (2006), “Evolutionary multi-objective optimization: a historical view of the field,” IEEE Computational Intelligence Magazine, 1, 28-36.

- Y. Jin, H. Wang, T. Chugh, D. Guo and K. Miettinen (2019), “Data-Driven Evolutionary Optimization: An Overview and Case Studies,” in IEEE Transactions on Evolutionary Computation, 23, 442-458.

- C. F. Hayes et al. (2022), “A practical guide to multi-objective reinforcement learning and planning,” Autonomous Agents and Multi-Agent Systems, 36, 26.

- K. S. Luck, H. Ben Amor, and R. Calandra (2020), “Data-efficient co-adaptation of morphology and behaviour with deep reinforcement learning,” Conference on Robot Learning, PMLR.

- J. Ogawa (2019), “Evolutionary Multi-objective Optimization for Evolving Soft Robots in Different Environments”, in Compagnoni, A., Casey, W., Cai, Y., Mishra, B. (eds) Bio-inspired Information and Communication Technologies.