Master's thesis: “Development of a data-driven driver model”

Supervisor: Prof. Ville Kyrki (ville.kyrki@aalto.fi)

Advisors: Daulet Baimukashev (daulet.baimukashev@aalto.fi), Shoaib Azam (shoaib.azam@aalto.fi)

Keywords: imitation learning, autonomous driving

Data-driven driver models are better suited than rule-based models to interactive multi-agent scenarios, where the behavior of other agents must be taken into account. For example, human drivers exhibit diverse driving styles, such as aggressive, neutral, or defensive [1], and these styles are hard to specify by hand because many factors from the surrounding environment must be considered. Such complex real-world interactions can, however, be learned from driving datasets and used to develop better driving policies [2, 3]. Incorporating human-like driving behavior into autonomous vehicles (AVs) is key to improving their predictability and acceptance.

Project Description

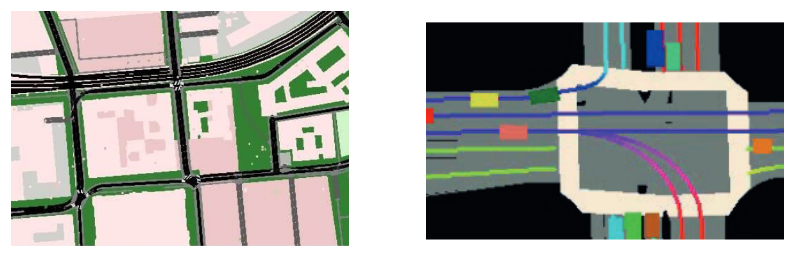

This thesis aims to develop data-driven driver models from expert driving data. Many real-world driving datasets covering diverse scenarios, together with closed-loop evaluation frameworks, are currently available from Waymo, NuPlan, and Lyft. The thesis will study different learning methods, such as imitation learning and offline reinforcement learning. The NuPlan framework will be used for the initial empirical evaluation of the proposed method. The resulting driver model will then be integrated into SUMO [4] to extend the simulator with more realistic driving policies, and it will be compared against the rule-based driver models available there.
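Behavioral cloning, the simplest form of imitation learning, treats expert demonstrations as a supervised regression problem from observed state to action. The sketch below illustrates only that idea and rests on simplifying assumptions: a hypothetical three-feature state (normalized ego speed, gap to the leader, and closing speed), a linear acceleration policy, a synthetic "expert" standing in for real NuPlan logs, and plain-Python gradient descent in place of a PyTorch network. All names are hypothetical, and this is not the method the thesis will develop.

```python
import random


def predict(w, b, state):
    """Linear policy: acceleration = w . state + b."""
    return sum(wi * si for wi, si in zip(w, state)) + b


def behavioral_cloning(states, actions, lr=0.2, epochs=3000):
    """Fit a linear policy to expert (state, action) pairs by full-batch
    gradient descent on the mean squared error (behavioral cloning)."""
    n, d = len(states), len(states[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for s, a in zip(states, actions):
            err = predict(w, b, s) - a  # error on one demonstration
            for i in range(d):
                grad_w[i] += 2.0 * err * s[i] / n
            grad_b += 2.0 * err / n
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


# Hypothetical expert: a fixed linear rule over normalized
# (ego speed, gap to leader, closing speed), standing in for logged human data.
TRUE_W, TRUE_B = [-1.5, 2.0, -3.0], 0.5


def expert_action(state):
    return predict(TRUE_W, TRUE_B, state)


if __name__ == "__main__":
    random.seed(0)
    demos = [[random.random() for _ in range(3)] for _ in range(100)]
    actions = [expert_action(s) for s in demos]
    w, b = behavioral_cloning(demos, actions)
    print([round(x, 2) for x in w], round(b, 2))
```

Because the synthetic expert is itself linear and noise-free, the fitted parameters should recover `TRUE_W` and `TRUE_B` closely. With real demonstrations the policy would be a neural network, and closed-loop evaluation is still needed, since open-loop regression does not expose the compounding errors that arise once the learned policy drives into states the expert never visited.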

Deliverables

- Literature review of related work

- Training data-driven driver models with different learning methods

- Comparison with existing rule-based driver models (IDM, MOBIL)

- Integration of the trained models into SUMO
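IDM (car following) and MOBIL (lane changing), the rule-based baselines named above, are short closed-form models, which makes it concrete what a learned driver model is compared against. The following is a minimal sketch of their standard published forms. The parameter values are illustrative assumptions, not SUMO defaults or values fixed by this project, and the class and function names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class IDMParams:
    v0: float = 30.0     # desired speed [m/s]
    T: float = 1.5       # desired time headway [s]
    s0: float = 2.0      # minimum standstill gap [m]
    a_max: float = 1.0   # maximum acceleration [m/s^2]
    b: float = 1.5       # comfortable deceleration [m/s^2]
    delta: float = 4.0   # acceleration exponent


def idm_acceleration(v: float, gap: float, v_leader: float,
                     p: IDMParams = IDMParams()) -> float:
    """IDM: a = a_max * [1 - (v/v0)^delta - (s*/gap)^2], with desired gap
    s* = s0 + max(0, v*T + v*dv / (2*sqrt(a_max*b)))."""
    if gap <= 0.0:
        raise ValueError("gap to the leader must be positive")
    dv = v - v_leader  # approach rate, positive when closing in
    s_star = p.s0 + max(0.0, v * p.T + v * dv / (2.0 * math.sqrt(p.a_max * p.b)))
    return p.a_max * (1.0 - (v / p.v0) ** p.delta - (s_star / gap) ** 2)


def idm_free_road(v: float, p: IDMParams = IDMParams()) -> float:
    """IDM acceleration with no leader (interaction term dropped)."""
    return p.a_max * (1.0 - (v / p.v0) ** p.delta)


def mobil_should_change(a_c: float, a_c_new: float,
                        a_n: float, a_n_new: float,
                        a_o: float, a_o_new: float,
                        politeness: float = 0.3,
                        threshold: float = 0.1,
                        b_safe: float = 4.0) -> bool:
    """MOBIL lane-change decision from accelerations before/after the change.

    c = ego vehicle, n = new follower in the target lane,
    o = old follower in the current lane.
    """
    # Safety criterion: the new follower must not be forced to brake harder than b_safe.
    if a_n_new < -b_safe:
        return False
    # Incentive criterion: own gain plus politeness-weighted gain of the followers.
    gain = (a_c_new - a_c) + politeness * ((a_n_new - a_n) + (a_o_new - a_o))
    return gain > threshold
```

IDM itself does not bound deceleration. For example, approaching a stopped vehicle at 20 m/s with a 20 m gap produces a deceleration far beyond physical limits, so simulation code would usually clip the output. The accelerations fed to `mobil_should_change` would typically come from evaluating `idm_acceleration` for each affected vehicle before and after the hypothetical lane change.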

Practical Information

Prerequisites: C++, Python, PyTorch, experience with machine learning/reinforcement learning

Simulators: SUMO, Lyft/SMARTS/NuPlan

Start: Available immediately

References

- [1] J. Karlsson, S. van Waveren, C. Pek, I. Torre, I. Leite, and J. Tumova, “Encoding Human Driving Styles in Motion Planning for Autonomous Vehicles,” 2021 IEEE International Conference on Robotics and Automation (ICRA), 2021, pp. 1050-1056, doi: 10.1109/ICRA48506.2021.9561777.

- [2] M. Bansal, A. Krizhevsky, and A. Ogale, “ChauffeurNet: Learning to Drive by Imitating the Best and Synthesizing the Worst,” arXiv preprint arXiv:1812.03079, 2018.

- [3] L. Bergamini, Y. Ye, O. Scheel, L. Chen, C. Hu, L. Del Pero, …, and P. Ondruska, “SimNet: Learning Reactive Self-Driving Simulations from Real-World Observations,” 2021 IEEE International Conference on Robotics and Automation (ICRA), 2021, pp. 5119-5125.

- [4] P. A. Lopez et al., “Microscopic Traffic Simulation using SUMO,” 2018 21st International Conference on Intelligent Transportation Systems (ITSC), 2018, pp. 2575-2582, doi: 10.1109/ITSC.2018.8569938.