Hypermaps: Closing the complexity gap in robotic mapping

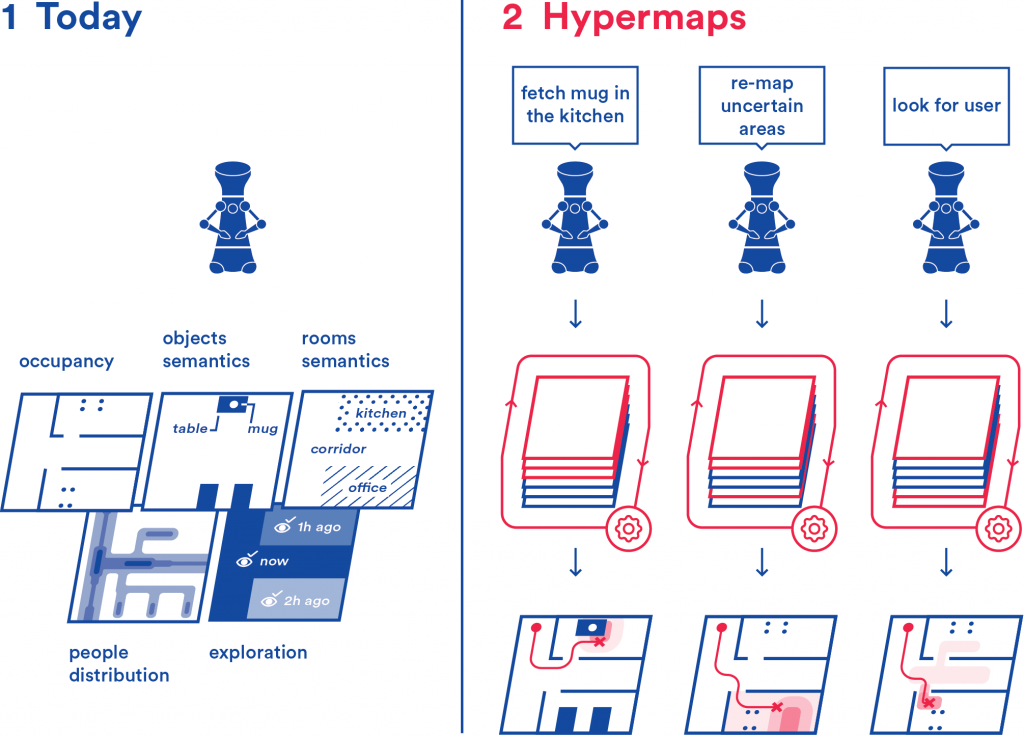

Hypermaps improves how robots manage data about the environment they inhabit. The most common way for robots to handle environmental information is by using maps.

At present, each kind of data is stored in a separate map. Just as our own use of maps has evolved from single-purpose maps (geographical, political, road maps, etc.) to multi-layer maps (like Google Maps) that automatically present us with task-relevant information, we propose a multi-layer mapping framework for robots.

Hypermaps simplifies the way robots access maps and helps them correlate information from different maps. This improves robots' ability to understand the world around them, perform more advanced tasks than they can today, better interpret user requests, and autonomously correct their knowledge about the environment. The research is conducted at Aalto University, in collaboration with the University of Bonn, the Technical University of Munich, KONE ltd, and GIM Robotics.

People involved

- Francesco Verdoja (francesco.verdoja@aalto.fi), staff scientist

- Ville Kyrki (ville.kyrki@aalto.fi), professor

Project updates

Master’s Thesis on “Visual segmentation of elevator components with robotic platform”

This thesis focuses on developing and evaluating visual segmentation methods for elevator components using a mobile robotic platform.

Master’s Thesis on “Tracking of Environmental Changes Using 3D Scene Graphs”

Scene understanding is crucial for advancing the deployment of autonomous robotic systems in the real world. The selected student will focus on developing a framework for constructing 3D scene graphs (3DSGs) capable of recording changes in dynamic environments as they occur.

Academy of Finland Research Fellowship awarded to the Hypermaps project

Francesco Verdoja was awarded a Research Fellowship for his project “Hypermaps: closing the complexity gap in robotic mapping”.

Video Presentation at PAL workshop @ ICRA 2020 “On the Potential of Smarter Multi-layer Maps in Robotics”

Watch the video presentation of our latest paper in the Hypermaps project.

Hypermaps at PAL ICRA 2020 workshop

We presented the core idea behind the Hypermaps project at the ICRA 2020 workshop on Perception, Action, Learning (PAL).

Source code for the Hypermaps project

The source code for the article “Hypermap Mapping Framework and its Application to Autonomous Semantic Exploration” is available on GitHub.

Pre-print of “Hypermap Mapping Framework and its Application to Autonomous Semantic Exploration” available on arXiv

Our paper has been uploaded to arXiv.