Deformation-Aware Data-Driven Grasp Synthesis

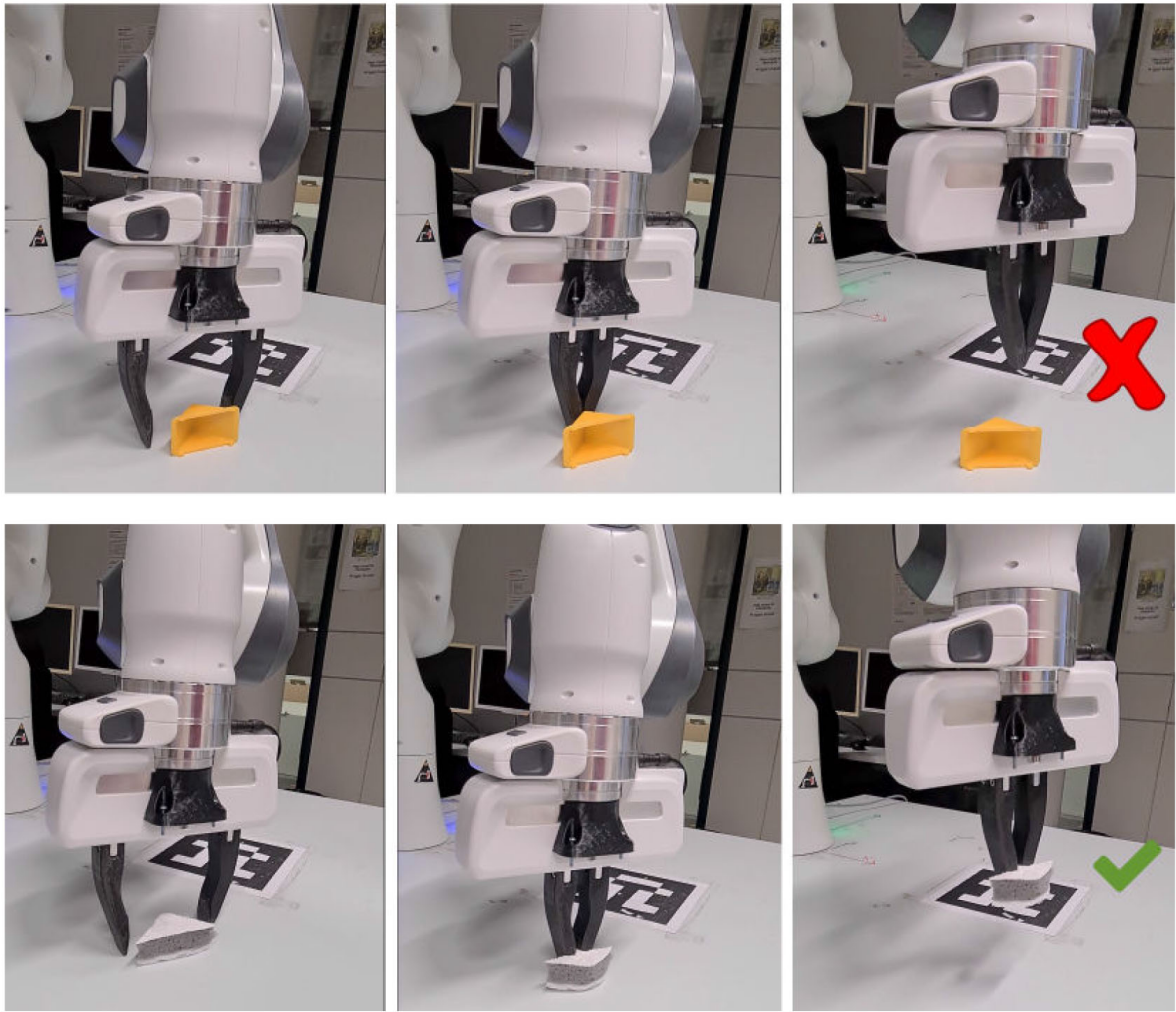

Abstract: Grasp synthesis for 3-D deformable objects remains a little-explored topic, and most existing works aim to minimize deformations. However, deformations are not necessarily harmful: humans, for example, can exploit them to generate new potential grasps. How to achieve this on a robot remains an open question. This letter proposes an approach that uses object stiffness information in addition to depth images to synthesize high-quality grasps. We achieve this by incorporating object stiffness as an additional input to a state-of-the-art deep grasp planning network. We also curate a new synthetic dataset of grasps on objects of varying stiffness, generated with the Isaac Gym simulator, for training the network. We experimentally validate the proposed approach and compare it against a baseline that does not incorporate object stiffness, on a total of 2800 grasps in simulation and 560 grasps on a real Franka Emika Panda. The experimental results show a significant improvement in grasp success rate with the proposed approach on a wide range of objects of varying shapes, sizes, and stiffnesses. Furthermore, we demonstrate that the approach can generate different grasping strategies for different stiffness values. Together, the results clearly show the value of incorporating stiffness information when grasping objects of varying stiffness.
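As a rough illustration of what "object stiffness as an additional input" could look like in practice, the sketch below broadcasts a scalar stiffness value into a constant image channel and stacks it with the depth image before it is passed to a convolutional network. This is only one common way to feed a scalar into an image-based network and is not necessarily the encoding used in the paper; the function name `stack_depth_and_stiffness` and the `max_stiffness` normalisation scale are hypothetical.

```python
import numpy as np


def stack_depth_and_stiffness(depth, stiffness, max_stiffness=1.0):
    """Return a (2, H, W) array: depth in channel 0, constant stiffness in channel 1.

    Assumption: stiffness is a single non-negative scalar per object, and
    max_stiffness is a hypothetical scale used to normalise it to [0, 1].
    """
    depth = np.asarray(depth, dtype=np.float32)
    if depth.ndim != 2:
        raise ValueError("depth must be a 2-D (H, W) image")
    # Normalise and clamp the stiffness so it lies in [0, 1].
    level = np.float32(np.clip(stiffness / max_stiffness, 0.0, 1.0))
    # Broadcast the scalar into an image-sized constant channel.
    stiffness_channel = np.full_like(depth, level)
    # Channel-first layout, as expected by most convolutional networks.
    return np.stack([depth, stiffness_channel], axis=0)
```

Calling the function with different stiffness values on the same depth image changes only the second channel, which is what would let a network condition its grasp predictions on stiffness.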

Link to paper: IEEE

Code is available here

Citation:

@ARTICLE{tran2022defggcnn,
  author={Nguyen Le, Tran and Lundell, Jens and Abu-Dakka, Fares J. and Kyrki, Ville},
  journal={IEEE Robotics and Automation Letters},
  title={Deformation-Aware Data-Driven Grasp Synthesis},
  year={2022},
  volume={7},
  number={2},
  pages={3038-3045},
  doi={10.1109/LRA.2022.3146551}}
